Operational Summary

A coordinated narrative emerged on February 25, 2026, and persisted through April 16, 2026, across 11 articles in 6 outlets. The operation seeks to acclimate the public to Pentagon intervention in private AI development, specifically the forced removal of ethical safeguards from a U.S. company’s model. The justification centers on an undefined national security mission and an unstated deadline, with no technical or strategic rationale provided.

Article Timeline

When articles appeared, colored by manipulation score.

Narrative Architecture

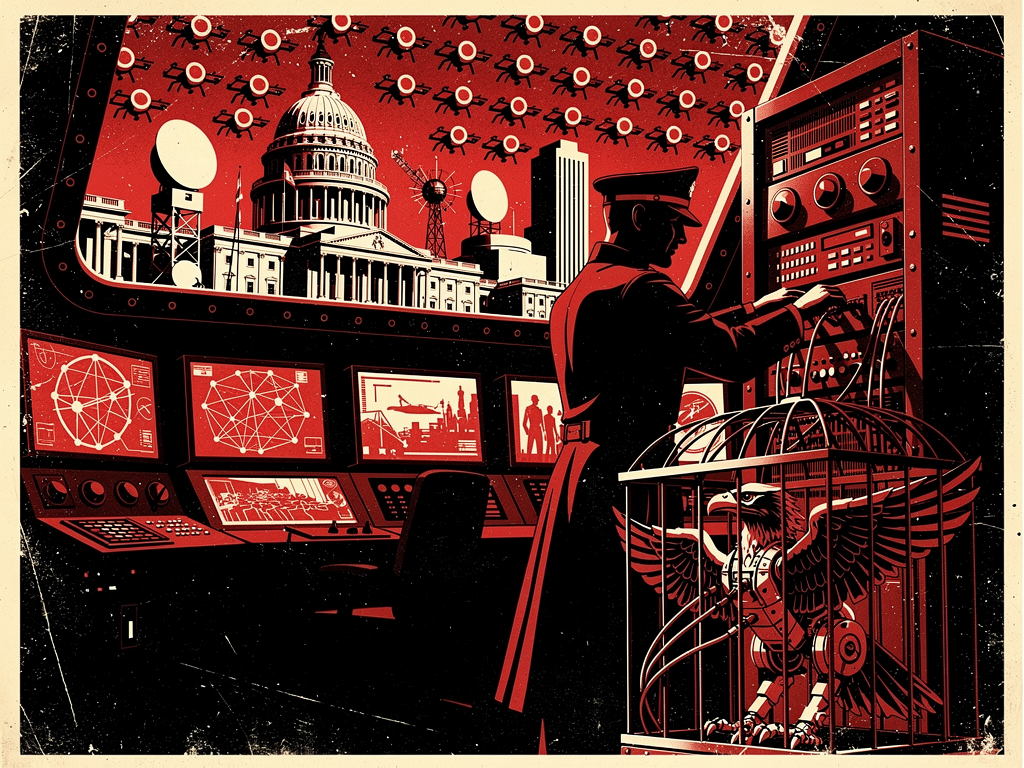

The narrative constructs urgency through temporal compression and existential stakes. Phrases like “CODE RED” and “AI Apocalypse” frame AI development as a zero-sum temporal race, where ethical constraints are positioned as fatal delays. The target, Anthropic, is characterized not as a corporate actor but as an ideological threat. Its ethical frameworks are reframed as obstructionist, akin to aiding adversaries. The word “woke” appears as a delegitimizing tag, linking internal corporate governance to external national vulnerability.

The national security mission remains unspecified. No details are given about the intended military application of the AI, the nature of the deadline, or why existing models cannot fulfill the objective. The omission is deliberate. By refusing to define the threat or the use case, the narrative evades technical scrutiny. Instead, it triggers affective compliance: fear of strategic lag, fear of systemic collapse, fear of betrayal from within the innovation sector.

China and Russia are invoked as monolithic adversaries engaged in unrestricted AI exploitation. Articles claim these nations use “query-and-copy techniques” to steal American IP, portraying U.S. ethical constraints as unilateral disarmament. No evidence is presented that such replication yields functional military AI, nor is there comparison to U.S. intelligence practices. The contrast is structural: disciplined foreign actors versus ideologically paralyzed domestic ones.

TikTok and DeepSeek are labeled “data vacuums” and “espionage tools,” despite no demonstration of active intelligence exfiltration. The argument hinges on potentiality, not evidence. Data collection by Western platforms is ignored, creating a false asymmetry. The goal is not accurate threat assessment but the establishment of a civilizational dichotomy: technologically aggressive autocracies versus ethically paralyzed democracies.

Source Distribution

Cross-Outlet Coordination Pattern

Coverage is ideologically diverse but thematically uniform. Breitbart, Fox News, and Times of India, outlets with distinct audiences, repeat the same core assertions: Anthropic’s ethics are a national liability, foreign actors exploit AI without restraint, and Pentagon intervention is necessary.

Breitbart publishes three articles advancing the ideological angle, linking Anthropic’s political donations to national decline. Fox News focuses on financial system risk, citing emergency meetings with Wall Street to authenticate the threat. Times of India amplifies the IP theft narrative, aligning with U.S. legislative proposals.

The synchronization suggests centralized narrative seeding. Independent outlets did not initiate coverage. The timeline shows no organic build-up. Articles appear in clusters, following a spike-and-decline pattern consistent with coordinated release. Language is not merely similar; the same phrases recur verbatim. Terms like “unacceptable risk,” “AI race,” and “data vacuums” appear across outlets with no variation in meaning or context.

Mainstream technology and defense media remain silent. The operation runs through political and national security outlets, not technical or industry publications. This indicates targeting of policy influencers and the politically engaged, not engineering or scientific communities.

Technique Assessment

The omission of technical detail, refusal to define the mission, and reliance on ideologically loaded tags (e.g., “woke”) are not oversights. They are structural features. The narrative does not seek to convince through evidence. It seeks to condition through repetition and fear.