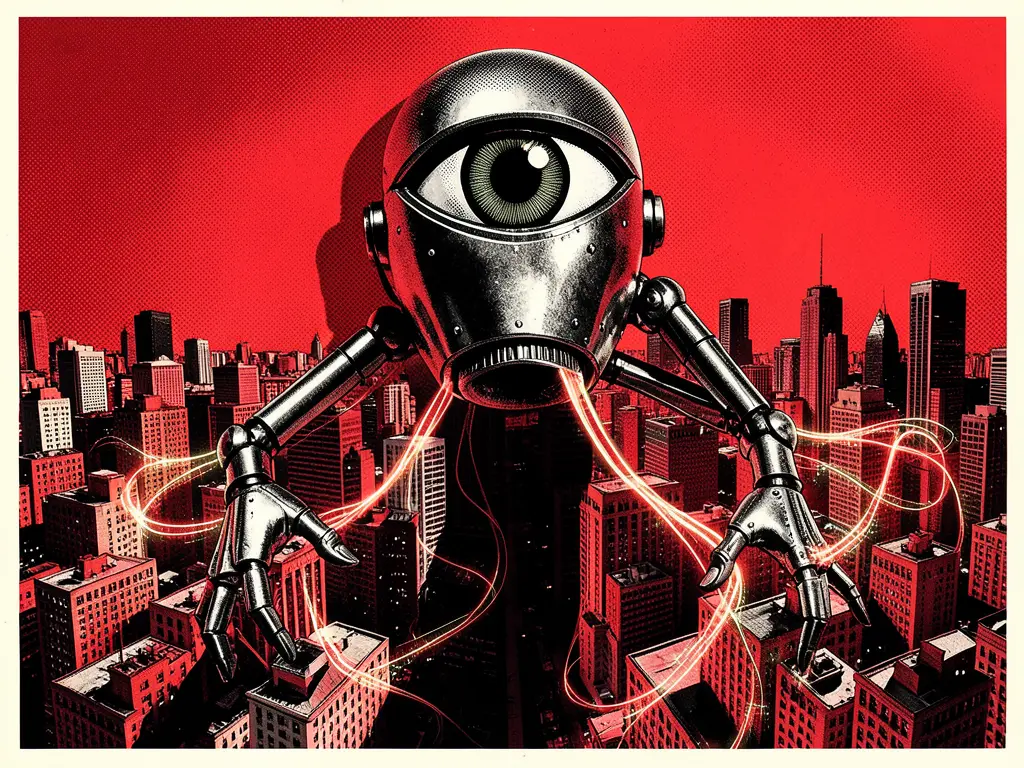

Intensity: 5/10 | Sources: 3 outlet(s) | Articles: 4 | First detected: February 25, 2026

Manufacturing Casus Belli (modified): Although no war is at issue, the 'urgent deadline' and 'national security mission' framing creates an artificial crisis that justifies aggressive action against a private company. The absence of any detailed explanation of this 'mission' mirrors the vagueness of a traditional casus belli. The pattern is visible in the headline “Pentagon sets Friday deadline for Anthropic to abandon ethics rules for AI — or else.”

Scapegoating and Displacement (modified): Anthropic is cast as the obstacle to national security, which deflects scrutiny from the nature of the Pentagon's AI requirements and from the ethical implications of rapidly integrating advanced AI into military operations. The Fox News article's framing of Anthropic as 'disloyal and dangerous' exemplifies this.

Moral Hazard: The narrative implies that ethical considerations are luxuries to be abandoned in a crisis. This creates a moral hazard by suggesting that urgent military needs automatically override ethical concerns, with no justification required. This is a core function of the 'urgent deadline' framing that recurs across all the articles.

Synchronized Narratives: Similar messaging appeared almost simultaneously across Fox News, Politico, and The Guardian within a narrow timeframe, which indicates coordinated output rather than independent reportage. All the articles push the same narrative: that Anthropic's ethical stance is a problem in the context of national security.

Attention Capture and Emotional Manipulation: The phrases 'urgent deadline' and 'national security mission' are designed to trigger fear and patriotism. They bypass rational deliberation about the nuanced ethics of AI in warfare, as seen in "US military leaders pressure Anthropic to bend Claude safeguards."

Controlled Opposition (modified): The Politico article on OpenAI's separate deal, which includes 'ethical safeguards,' creates a false dichotomy. It suggests that Anthropic is the problem and that ethical AI integration remains possible, without questioning the underlying premise of military AI coercion.

PSYOP Alert | March 8, 2026

Pentagon AI Coercion: Normalization and Ethical Containment

PSYOP Intensity: 8

8 articles | 8 outlets

Avg Manipulation: 0 out of 100

Elevated: multiple influence tactics active

Related PSYOP

This alert covers a cross-outlet propaganda operation:

Normalize Pentagon AI Coercion (8 sources)

This operation tries to justify the Pentagon forcing an AI company to drop ethical safeguards by highlighting an urgent deadline and a vague "national security mission," without explaining *why* those ethics are problematic or *what* that mission actually entails.

Articles Analyzed

| Score | Article |
| --- | --- |
| 85 | 'CODE RED' Author Tells 'Daily Mail:' AI 'Data Vacuums' Like TikTok and DeepSeek Are Chinese Espionage Tools |
| 78 | New York Post Publishes Stunning Excerpt of 'CODE RED' Exposing Truth of AI-Powered Autonomous Weapons |
| 64 | Trump says he plans to order federal ban on Anthropic AI after company refuses Pentagon demands |
| 62 | US House to government: Find out and ban Chinese and Russian companies that use query-and-copy techniques against American AI companies |
| 62 | AI Wars: U.S. Government Considers Anthropic an 'Unacceptable Risk' to National Security |
| 62 | OpenAI announces new deal with Pentagon — including ethical safeguards |
| 59 | Pinkerton: The Guide to Averting the AI Apocalypse |
| 55 | Bessent, Powell summon Wall Street CEOs for emergency meeting over Anthropic AI risks amid Pentagon dispute |
| 54 | US military leaders pressure Anthropic to bend Claude safeguards |
| 52 | Pentagon sets Friday deadline for Anthropic to abandon ethics rules for AI — or else |
| 49 | By your command, my robot: AI war games spark debate about ethical limits |